For the last six months I’ve been trying to integrate Cisco’s C-Series rack mount servers into our existing UCS infrastructure. It hasn’t gone well, especially considering how low the bar is: run VMware in a VDI cluster and/or install Windows onto the server’s own local storage. To be fair, I have been able to use the four M4 C240 servers in a boot-from-SAN scenario, so it isn’t all bad.

In the interest of management convergence, Cisco enabled a new feature in UCS firmware bundle 2.2.5a allowing storage profiles to be used with local disks on rack mount servers. This seems like a good idea if you have several rack mounts with the same hardware configuration. The UCS would create the virtual disks on the rack mount servers in whatever RAID configuration is needed. In earlier UCS releases, rack mount local storage had to be configured through the server’s RAID controller. You might want to stick to that plan for now.

I opened a ticket with Cisco TAC after continually getting an “Incomplete LUN Configuration” error when trying to apply a customized service profile to an M4 C220 rack mount server. Another curious behavior was a warning from the UCS that Disk 9 was inoperable. There is no Disk 9 because the server only has eight slots, so yeah, it’s inoperable alright. Still, that bothered me a bit because I couldn’t be certain that the phantom disk wasn’t causing the problem in the storage profile or the disk group policy.

The Storage Profile is used to name and size the LUN(s). The Disk Group Policy is referenced in the Storage Profile with the number of physical disks to use in the LUN(s), the type of disk and the RAID level. They work together to configure the local storage on the server after the entire Service Profile is applied successfully.

I created a Storage Profile with two LUNs, one for the system files (Linux installation) and the other for data. I checked the box “Expand to Available” for the system LUN. The UCS documentation states that only one LUN in a storage profile can use the expand feature.

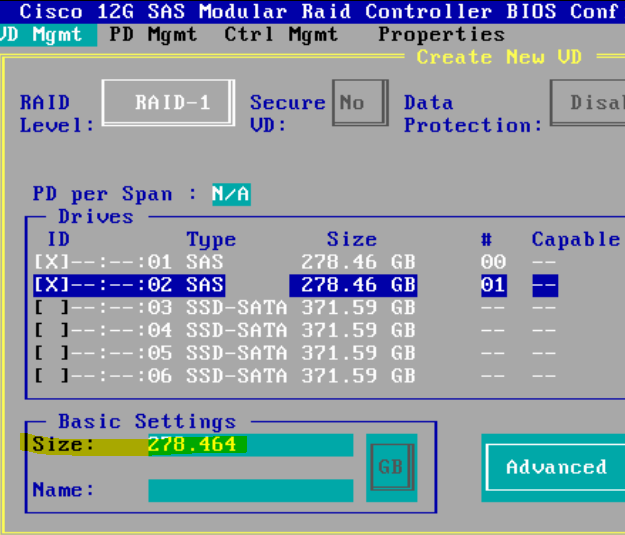

I then created two Disk Group Policies. The system LUN would use the only two HDDs and be RAID 1 mirrored. The data LUN would use the remaining SSDs in a dual parity RAID 6 volume. In the Storage Profile I selected the appropriate Disk Group Policy for each LUN and saved. Lastly, I added the Storage Profile to the Service Profile and off we go to nowhere.
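To make the relationship between the two objects concrete, here is a minimal Python sketch of the layout described above. It is only a data model for illustration, not a UCS API: the class names, the `problems` helper, the policy names, the SSD count (six, assuming the other slots of an eight-slot server are all populated) and the data LUN size are all assumptions that do not come from UCS Manager. The one rule it checks is the documented limit of a single “Expand to Available” LUN per storage profile.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DiskGroupPolicy:
    """Which physical disks back a LUN (hypothetical model, not a UCS API)."""
    name: str
    raid_level: str   # e.g. "raid1" or "raid6"
    drive_type: str   # "HDD" or "SSD"
    num_drives: int


@dataclass
class Lun:
    """A named LUN that references one disk group policy."""
    name: str
    disk_group: DiskGroupPolicy
    size_gb: Optional[int] = None        # ignored when expanding
    expand_to_available: bool = False


@dataclass
class StorageProfile:
    name: str
    luns: List[Lun] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return a list of rule violations; an empty list means none found."""
        found = []
        expanding = [lun.name for lun in self.luns if lun.expand_to_available]
        if len(expanding) > 1:
            found.append("only one LUN may expand to available: "
                         + ", ".join(expanding))
        for lun in self.luns:
            if not lun.expand_to_available and not lun.size_gb:
                found.append(f"{lun.name}: fixed-size LUN needs size_gb")
        return found


system_dg = DiskGroupPolicy("dg-system", "raid1", "HDD", 2)
data_dg = DiskGroupPolicy("dg-data", "raid6", "SSD", 6)   # SSD count is assumed
profile = StorageProfile("linux-local", [
    Lun("system", system_dg, expand_to_available=True),
    Lun("data", data_dg, size_gb=1000),                   # size is a placeholder
])
print(profile.problems())  # prints []
```

A profile that passes this check can still fail to associate, as the rest of this post shows. The sketch only captures what the documentation says should be allowed.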

Here’s a list of what doesn’t work in the UCS as it tries to manage the rack servers. TAC said these were undocumented and may be bugs:

Inventory:

Cisco could not remove the nonexistent Disk 9 from the inventory list. TAC verified the eight slots in the RAID controller BIOS and shrugged.

Storage Profiles And Disk Group Policies:

- The LUN sizing is not logical. The overhead for a RAID 1 configuration is 50%, since one full disk holds the mirror copy (RAID 1 has no parity). If I configure the virtual disk in the RAID controller BIOS (a virtual disk is a LUN to the UCS) as a RAID 1 mirror, it correctly gives me half of the total disk space for use.

In the UCS, using the “Expand to Available” check box would error out during an association attempt with:

If I manually entered the correct amount (278GB) it would fail the same way. The only way to get the service profile to associate was to use a lesser amount than the maximum. I didn’t do that. It’s maximum or nothing, dammit! And, it’s really a guessing game. A setting of 250GB would associate, but would 266? 270? I don’t know.

I was obeying the UCS documentation at this point by using the “Expand to Available” option on only one LUN in a Storage Profile. I should have seen that as a warning anyway. Why would I want to do that on just one LUN?

- Automatic Group Configuration doesn’t work.

In the Disk Group Policies I wanted to use all the HDDs (two) for a RAID 1 disk. No can do!

I got the same error when choosing all the SSDs for a RAID 6 disk. Cisco said that the auto disk configuration by type wasn’t fully cooked yet.

I can make this work IF I lower the maximum amount for the RAID 1 volume, guess what could be an acceptable amount for the RAID 6 volume (lower than the maximum, obviously) and manually choose the disks.
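For reference, the maximum usable size for each volume is simple arithmetic, sketched below in Python. The function name is made up for illustration, it only covers the two RAID levels used in this post, and it ignores whatever small amount the controller reserves for its own metadata. The 278GB figure is the per-disk size from above; the SSD count and size are placeholders, since only the HDD mirror size is given here.

```python
def usable_capacity_gb(raid_level: str, num_disks: int, disk_gb: int) -> int:
    """Usable capacity for equally sized disks (hypothetical helper).

    raid1: a two-disk mirror, so one disk's worth of space is usable.
    raid6: dual parity, so two disks' worth of space is lost.
    """
    level = raid_level.lower()
    if level == "raid1":
        if num_disks != 2:
            raise ValueError("this sketch only models a two-disk mirror")
        return disk_gb
    if level == "raid6":
        if num_disks <= 2:
            raise ValueError("RAID 6 needs more disks than its two parity disks")
        return (num_disks - 2) * disk_gb
    raise ValueError(f"unsupported RAID level: {raid_level}")


# Two 278GB HDDs mirrored: half of the raw 556GB is usable.
print(usable_capacity_gb("raid1", 2, 278))   # prints 278

# Six SSDs of an assumed 400GB each in RAID 6 (both numbers are placeholders).
print(usable_capacity_gb("raid6", 6, 400))   # prints 1600
```

These are the numbers the RAID controller BIOS arrives at on its own, and the ones the UCS refused to accept during association.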

I chose to configure the volumes directly on the RAID controller. After doing that I applied one of my base service profiles (without storage parameters beyond HBA configurations) successfully.

Cisco didn’t elaborate on whether the latest firmware (2.2.1b or 2.2.6a) would fix these problems. I’m guessing not, since upgrading firmware is usually one of the suggested options.


Hi, running into this exact same issue, thought I was going nuts for a bit!

Have you had any resolution to this problem? Nothing I have tried will let me apply the profile.

Hello-

No, there has not been a resolution that involves UCS storage management. What I did to work around the problem was create the LUNs on the server’s RAID controller. In the UCS I assigned a service profile that had no local storage.

Have you tested with the latest firmware (3.1)? I just tested and things appear to be much better. Being able to configure the internal PCH controller for boot drives and the 12G SAS controller for the front-facing drives is extremely valuable at scale! The one thing the admin will still need to do at this point is mark new HDDs as unconfigured good, so the service profile can use them, before applying the changes.

Thanks for the info. No, we haven’t moved up to 3.1 yet. We may be forced to for our NVIDIA GRID implementation anyway.