I had some problems introducing M4 blades and a C-Series (C240) server into an existing UCS infrastructure. All the new servers had VMware ESXi 5.5 installed and were vCenter cluster members. One networking issue involved the VMware management network: in vCenter, these hosts would sporadically drop offline, leaving them in a network-partitioned state. Interestingly, I could ping the partitioned hosts from other VLANs, but pings from the VMkernel vNICs of the working VMware hosts on the management VLAN failed.

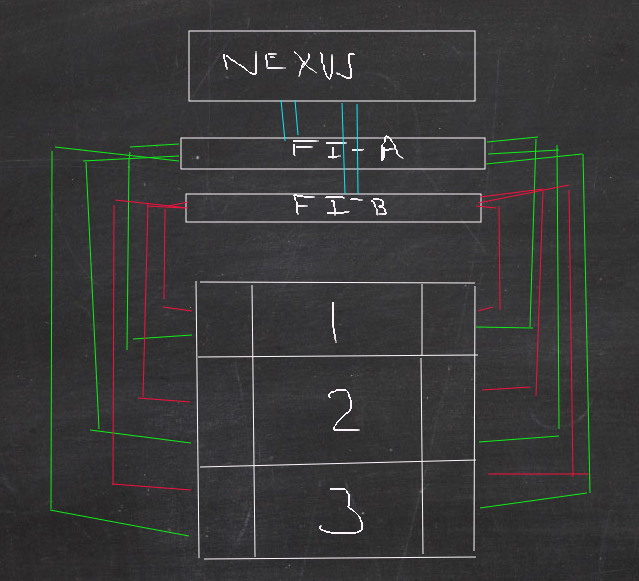

A brief explanation of the setup: VMware ESXi 5.5 is installed on Cisco B200-M3 and B200-M4 blades in three Cisco 5108 chassis. The UCS Service Profiles assign nine vNICs to each ESXi host. The even-numbered vNICs are pinned to FI-A (Fabric Interconnect A) and the odd-numbered vNICs to FI-B. The C240 rack mount server connects to the FIs through two onboard Cisco VICs, one connected to each FI. The FIs connect to a northbound Cisco Nexus switch. To the chalkboard:

The red and green lines represent how each chassis (numbered 1, 2, 3) is connected to the FIs through its IO modules. Each chassis has two IO modules (the boxes to the left and right of the number in the diagram). Green connections go to FI-A and red to FI-B. The FIs have port-channeled connections to the Nexus (blue lines). Not shown is a physical cable between the FIs; it does not pass network traffic, just configuration and status information for failover. Also not pictured is the C240, which has four connections, two to each FI, and is managed by UCS.

In the VMware configuration, specifically the management network, I have two vNICs (0 and 1) in Active-Active mode with load balancing. Per the UCS Service Profiles, vNIC 0 is pinned to FI-A and vNIC 1 to FI-B. The management network generally lives on a vCenter-managed vDS, but I also had some new M4s on a standard virtual switch with the same Active-Active configuration.

After various calls with VMware (worthless: I can reboot the host on my own, thanks) and with Cisco (helpful), it was becoming clear that we had a MAC address problem somewhere. The main clue came from moving one of the VMware vNICs from Active to Standby. When the host used only FI-A (vNIC 0 on the VMware host), it could communicate with the cluster members and vCenter. Adding vNIC 1 (FI-B) back brought back the sporadic network drops.

Confirmation came when Cisco asked to see the MAC address tables cached on the Nexus. The FIs are supposed to refresh these tables, but “sometimes they don’t”.

The MAC addresses on the VMware host vNICs are assigned by UCS, so there is no MAC translation anywhere in the infrastructure. What DOES have to happen is that those MAC addresses are known across the entire infrastructure, specifically northbound on the Nexus.

MAC traversal from the VMware host’s (VM) vNICs through the infrastructure to the north point Nexus.

The red and green boxes represent the MAC addresses and where they need to reside for data sent from one FI to reach the other. If a MAC is missing at the north point, the packet is dropped because the destination is unreachable.
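To make that failure mode concrete, here is a toy Python model of the hairpin path. It is a sketch under simplifying assumptions, not how a Nexus or an FI is actually implemented: each FI knows only the MACs of the vNICs pinned to it, the Nexus holds a MAC-to-FI table, and a frame is lost when the Nexus entry is missing (treated as a drop, as described above) or stale (pointing at an FI that doesn't own the MAC). Real switches normally flood unknown unicast, so the "missing" case is a deliberate simplification. The class and function names and the MAC addresses are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class FabricInterconnect:
    name: str
    # MACs of the vNICs pinned to this FI (learned on its server-facing ports).
    server_macs: set[str] = field(default_factory=set)


@dataclass
class Nexus:
    # MAC address -> name of the FI whose port channel the Nexus learned it on.
    mac_table: dict[str, str] = field(default_factory=dict)


def deliver(dst_mac: str, src_fi: FabricInterconnect,
            fis: dict[str, FabricInterconnect], nexus: Nexus) -> str:
    """Describe what happens to a frame sent from a vNIC on src_fi to dst_mac."""
    # Destination pinned to the same FI: no trip to the Nexus is needed.
    if dst_mac in src_fi.server_macs:
        return f"delivered locally on {src_fi.name}"
    # Otherwise the frame hairpins: up to the Nexus, which must know the MAC.
    egress = nexus.mac_table.get(dst_mac)
    if egress is None:
        # Simplification: treated as a drop, as the post describes.
        return "dropped: MAC missing on Nexus"
    target = fis[egress]
    if dst_mac in target.server_macs:
        return f"delivered via Nexus to {target.name}"
    # Stale entry: the Nexus sends the frame toward an FI that doesn't own the MAC.
    return f"dropped: stale Nexus entry points at {target.name}"


if __name__ == "__main__":
    # Hypothetical MACs: one vNIC pinned to FI-A, one pinned to FI-B.
    fi_a = FabricInterconnect("FI-A", {"00:25:b5:0a:00:01"})
    fi_b = FabricInterconnect("FI-B", {"00:25:b5:0b:00:02"})
    fis = {"FI-A": fi_a, "FI-B": fi_b}

    fresh = Nexus({"00:25:b5:0b:00:02": "FI-B"})
    stale = Nexus({"00:25:b5:0b:00:02": "FI-A"})
    print(deliver("00:25:b5:0b:00:02", fi_a, fis, fresh))  # delivered via Nexus to FI-B
    print(deliver("00:25:b5:0b:00:02", fi_a, fis, stale))  # dropped: stale Nexus entry points at FI-A
```

Only the cross-fabric frames ever depend on the Nexus table, which matches the symptom: traffic worked while everything stayed on FI-A.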

What we have here is data hair-pinning. It isn’t optimal, but it is necessary in most environments, and here it exposed the MAC address traversal problem. Because VMware is load balancing the management network, data flows over both FIs, and some data sent from a vNIC pinned to FI-A is bound for a vNIC pinned to FI-B:

A simplified look at data hair-pinning when sending data from FI-A to FI-B and vice versa. The blue boxes represent vNICs on VMware hosts, each pinned to a different FI.
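The sketch below counts which host pairs hairpin. It assumes each host’s management VMkernel port is carried by exactly one vNIC at a time (as with VMware’s default "Route based on originating virtual port" load balancing) and applies the pinning rule from the setup: even vNICs to FI-A, odd vNICs to FI-B. The host names, vNIC assignments, and function names are hypothetical.

```python
from itertools import combinations


def fabric_for_vnic(vnic: int) -> str:
    # UCS Service Profile pinning from the post: even vNICs -> FI-A, odd -> FI-B.
    return "FI-A" if vnic % 2 == 0 else "FI-B"


def hairpin_pairs(host_vnics: dict[str, int]) -> list[tuple[str, str]]:
    """Return host pairs whose management traffic must cross the Nexus.

    host_vnics maps a (hypothetical) host name to the vNIC currently carrying
    its management VMkernel port.
    """
    return [
        (h1, h2)
        for h1, h2 in combinations(sorted(host_vnics), 2)
        if fabric_for_vnic(host_vnics[h1]) != fabric_for_vnic(host_vnics[h2])
    ]


if __name__ == "__main__":
    # Active-Active teaming: hosts end up spread across both fabrics.
    teamed = {"esx1": 0, "esx2": 1, "esx3": 0, "esx4": 1}
    # Explicit failover order: every host uses vNIC 1 (FI-B).
    pinned = {"esx1": 1, "esx2": 1, "esx3": 1, "esx4": 1}
    print(len(hairpin_pairs(teamed)), "of 6 pairs hairpin")  # 4 of 6 pairs hairpin
    print(len(hairpin_pairs(pinned)), "of 6 pairs hairpin")  # 0 of 6 pairs hairpin
```

With the spread shown, four of the six host pairs send management traffic through the Nexus; putting every host on the same fabric brings that to zero.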

The MAC addressing problem on the newer M4 blades was eventually solved by creating new service profiles for the blades in UCS. Apparently, profiles can get corrupted. And why not? Corruption is everywhere! The C240 rack mount server, however, still couldn’t stay on the management network, and we were considering using it for something other than a VMware host.

I was talking about this problem with a Trace3 consultant team, and they mentioned a well-used option they had learned through several VMware-on-UCS installs: remove VMware vNIC teaming and, with it, the hairpin. Hmm… so I would be assigning certain traffic types (replication, vMotion, guest, management, etc.) to certain FIs, and the only way that traffic would reach the other FI would be through Cisco-layer failover. The other benefit of this design would be speed, since the Nexus wouldn’t be taxed with FI-to-FI traffic. I figured the speed difference would be minimal with a 10Gb backbone, but I could see the logic in simplifying data flows. And if the backbone was that fast, did I really need to team the VMware host’s vNICs at all?

The Design:

This is a pretty simple change. I’ll leave the UCS profiles alone and work on the lowest virtualization tier: VMware. I want to make sure that vMotion and management network traffic don’t hairpin through the Nexus switch. On the vDS port groups assigned to those traffic types, I’ll change the Teaming and Failover policy:

Changing the Failover Order of the uplinks is the only step needed to force traffic through one FI: move one of them from Active to Standby.

Using the above example, all VMware hosts assigned to this vDS port group will use Uplink 2 for data transfer. In my case, Uplink 2 has vNIC 1 attached to it. Since all of my odd-numbered vNICs are pinned to FI-B, I know this traffic will use FI-B unless it fails.

Let’s talk about failure for a few sentences. I talked about using VMware or Cisco for failover in this post. I’m still using VMware, so there are no changes to failure detection. I’ll keep the port group’s Network Failure Detection method as Link Status. If an FI fails, so does the link, and VMware switches to the standby adapter pinned to the other FI.
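Here is a small model of the port group’s failover order combined with link-status detection. It assumes Uplink 1 carries vNIC 0 (the post only states that Uplink 2 carries vNIC 1) and that one healthy standby uplink is promoted for each failed active uplink. `UPLINK_TO_VNIC`, `uplinks_in_use`, and `fabrics_in_use` are hypothetical names, not a VMware API.

```python
# Hypothetical mapping: Uplink 2 -> vNIC 1 is from the post; Uplink 1 -> vNIC 0 is assumed.
UPLINK_TO_VNIC = {"Uplink 1": 0, "Uplink 2": 1}


def fabric_for_vnic(vnic: int) -> str:
    # UCS Service Profile pinning: even vNICs -> FI-A, odd vNICs -> FI-B.
    return "FI-A" if vnic % 2 == 0 else "FI-B"


def uplinks_in_use(active: list[str], standby: list[str],
                   link_up: dict[str, bool]) -> list[str]:
    """Uplinks carrying traffic under link-status-only failure detection.

    Active uplinks with link up are used; for each active uplink whose link is
    down, the next standby uplink with link up is promoted (an assumption).
    """
    healthy_active = [u for u in active if link_up.get(u, False)]
    failed = len(active) - len(healthy_active)
    healthy_standby = [u for u in standby if link_up.get(u, False)]
    return healthy_active + healthy_standby[:failed]


def fabrics_in_use(active: list[str], standby: list[str],
                   link_up: dict[str, bool]) -> list[str]:
    # Translate the selected uplinks into the FIs their vNICs are pinned to.
    return [fabric_for_vnic(UPLINK_TO_VNIC[u])
            for u in uplinks_in_use(active, standby, link_up)]


if __name__ == "__main__":
    active, standby = ["Uplink 2"], ["Uplink 1"]
    # Normal operation: everything stays on FI-B.
    print(fabrics_in_use(active, standby, {"Uplink 1": True, "Uplink 2": True}))   # ['FI-B']
    # FI-B fails, its link goes down, and the standby on FI-A takes over.
    print(fabrics_in_use(active, standby, {"Uplink 1": True, "Uplink 2": False}))  # ['FI-A']
```

Because detection is link status only, the model reacts solely to link state; a path failure further north that leaves the link up would not trigger a switch.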

Since I made these adjustments, the C240 server has been working as expected. I put it back in my VDI production cluster and it’s been playing nice with the other cluster members. As for vMotion speed, it’s a bit faster for sure. It was pretty damn fast before, but now it’s blazing.

None of these changes should be necessary; technically, all of the Cisco equipment should just work together. Still, I like the idea of controlling data flow, since paths aren’t easy to verify even with the available Cisco UCS utilities.

We are having very similar issues. Do you have a Cisco case number that can be referenced?

Brian-

You can reference this case number: 635659549. Cisco ended up replacing the C240 server with another one. After this case was closed, I had multiple failed vMotion tasks that Cisco attributed to the CPU model in that particular server. The replacement server has worked fine since, and the M4s are still working fine as well.